Introduction

There have been many experiments with the sizes of computers; some of them have stayed around and some have gone away. The trend has been to make computers smaller: the early computers had buildings for them. Recently some classes of computers have become about as small as could reasonably be desired. For example, phones are thin enough that they can blow away in a strong breeze, smart watches are much the same size as the old-fashioned watches they replace, and NUC-type computers are as small as they need to be given the size of the monitors etc. that they connect to.

This means that further development in the size and shape of computers will largely be determined by human factors.

I think we need to consider how computers might be developed to better suit humans and how to write free software to make such computers usable without being constrained by corporate interests.

Those of us who are involved in developing OSs and applications need to consider how to adjust to these changes and ideally anticipate them. While we can't anticipate the details of future devices, we can easily predict general trends such as being smaller, having higher resolution, etc.

Desktop/Laptop PCs

When home computers first came out it was standard to have the keyboard in the main box, the Apple ][ being the best-known example. This has lost popularity due to the demand for multiple options for a light keyboard that can be moved for convenience, combined with multiple options for the box part. But it still pops up occasionally, such as the Raspberry Pi 400 [1], which succeeds because the computer part is small and light. I think this type of computer will remain a niche product. It could be used in an "add a screen to make a laptop" model, as opposed to the "add a keyboard to a tablet to make a laptop" model, but a tablet without a keyboard is more useful than a non-server PC without a display.

The PC as a box with connections for keyboard, display, etc. has a long future ahead of it. But the sizes will probably decrease (they should have stopped making PC cases to fit CD/DVD drives at least 10 years ago). The NUC size is a useful option, and I think that DVD drives will soon stop being used for software, which will allow a range of smaller form factors.

The regular laptop will remain useful, but tablets with detachable keyboards could take a lot of that market. Full functionality for all tasks requires a keyboard because at the moment text editing with a touch screen is an unsolved problem in computer science [2].

The Lenovo Thinkpad X1 Fold [3] and related Lenovo products are very interesting. Advances in materials allow laptops to be thinner and lighter, which leaves the screen size as a major limitation to portability. There is a conflict between desiring a large screen to see lots of content and wanting a small size to carry, and making a device foldable is an obvious solution that has recently become possible. Making a foldable laptop drives a desire for not having a permanently attached keyboard, which then makes a touch screen keyboard a requirement. So user interfaces for PCs have to be adapted to work well on touch screens. The Think line seems to be continuing the history of innovation that it had when owned by IBM. There is also a range of other laptops that have two regular screens, so they are essentially the same as the Thinkpad X1 Fold but with two separate screens instead of one folding one; prices are as low as $600US.

I think that the typical interfaces for desktop PCs (e.g. MS-Windows and KDE) don't work well for small devices and touch devices, and the Android interface generally isn't a good match for desktop systems. We need to invent more options for this. This is not a criticism of KDE; I use it every day and it works well. But it's designed for use cases that don't match the new hardware that is on sale. As an aside, it would be nice if Lenovo gave samples of their newest gear to people who make significant contributions to GUIs. Give a few Thinkpad Fold devices to KDE people, a few to GNOME people, and a few others to people involved in Wayland development, and see how that promotes software development and future sales.

We also need to adopt features from laptops and phones into desktop PCs. When voice recognition software was first released in the 90s it was for desktop PCs. It didn't take off, largely because it wasn't very accurate (none of them recognised my voice). Now voice recognition in phones is very accurate, and it's very common for desktop PCs to have a webcam or headset with a microphone, so it's time for this to be re-visited. GPS support in laptops is obviously useful and can work via Wifi location, via a USB GPS device, or via wwan mobile phone hardware (even if not used for wwan networking). Another possibility is using the same software interfaces as used for GPS on laptops for a static definition of location for a desktop PC or server.
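Here is a minimal sketch of that static-location idea. It assumes the consumer understands NMEA 0183, the plain-text sentence format most GPS receivers emit, so a desktop PC could describe its configured position as a GGA sentence. The function names and coordinates are hypothetical. Using fix quality 7 ("manual input mode") to mark the position as configured rather than measured is an assumption. Feeding the output to something like gpsd is not shown.

```python
from datetime import datetime, timezone


def nmea_checksum(body: str) -> str:
    """XOR of every character between '$' and '*', as two uppercase hex digits."""
    value = 0
    for char in body:
        value ^= ord(char)
    return f"{value:02X}"


def to_nmea_coord(degrees: float, is_latitude: bool) -> tuple[str, str]:
    """Convert signed decimal degrees to NMEA ddmm.mmmm / dddmm.mmmm plus hemisphere."""
    if is_latitude:
        hemisphere = "N" if degrees >= 0 else "S"
    else:
        hemisphere = "E" if degrees >= 0 else "W"
    value = abs(degrees)
    whole = int(value)
    minutes = round((value - whole) * 60, 4)
    if minutes >= 60:  # rounding can turn 59.99999 into 60.0000, so carry it
        whole += 1
        minutes -= 60
    width = 2 if is_latitude else 3
    return f"{whole:0{width}d}{minutes:07.4f}", hemisphere


def static_gga(lat: float, lon: float, altitude_m: float, when: datetime) -> str:
    """Build a GGA sentence for a hypothetical fixed (configured) location."""
    lat_str, lat_hemi = to_nmea_coord(lat, is_latitude=True)
    lon_str, lon_hemi = to_nmea_coord(lon, is_latitude=False)
    fields = [
        "GPGGA",
        when.strftime("%H%M%S"),  # hhmmss; the caller should pass a UTC datetime
        lat_str, lat_hemi,
        lon_str, lon_hemi,
        "7",                      # fix quality 7: manual input mode (assumed acceptable)
        "00",                     # satellites used: none, the position is configured
        "",                       # HDOP unknown
        f"{altitude_m:.1f}", "M",  # altitude above mean sea level, in metres
        "", "M",                  # geoid separation unknown
        "", "",                   # no differential correction data
    ]
    body = ",".join(fields)
    return f"${body}*{nmea_checksum(body)}"


if __name__ == "__main__":
    # Arbitrary placeholder coordinates, not a real configuration.
    now = datetime.now(timezone.utc)
    print(static_gga(-37.8136, 144.9631, 31.0, now))
```

The checksum is the XOR of every character between "$" and "*", so the same helper could also validate sentences read back from a real receiver.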

The Interesting New Things

Watch Like

The wrist-watch [4] has been a standard format for easy access to data when on the go since its military use at the end of the 19th century, when the practical benefits beat the supposed femininity of the watch. So it seems most likely that watches will continue to be in widespread use in computerised form for the foreseeable future. For comparison, smart phones have been in widespread use as pocket watches for about 10 years.

The question is how watch computers will end up. Will we have Dick Tracy style watch phones that you speak into? Will it be the current smart watch functionality of using the watch to answer a call which goes to a bluetooth headset? Will smart watches end up taking over the functionality of the calculator watch [5], which was popular in the 80s? With today's technology you could easily have a fully capable PC strapped to your forearm; would that be useful?

Phone Like

Folding phones (originally popularised as Star Trek Tricorders) seem likely to have a long future ahead of them. Engineering technology has only recently developed to the stage of allowing them to work the way people would hope them to work (a folding screen with no gaps).

Phones and tablets with multiple folds are coming out now [6]. This will allow phones to take much of the market share that tablets used to have while tablets and laptops merge at the high end.

I've previously written about Convergence between phones and desktop computers [7]; the increased capabilities of phones add to the case for Convergence.

Folding phones also provide new possibilities for the OS. The Oppo OnePlus Open and the Google Pixel Fold both have a UI based around using the two halves of the folding screen for separate data at some times. I think that the current user interfaces for desktop PCs don't properly take advantage of multiple monitors, and the possibilities raised by folding phones only add to the lack. My pet peeve with multiple monitor setups is when they don't make it obvious which monitor has keyboard focus, so you send a CTRL-W or ALT-F4 to the wrong screen by mistake; it's a problem that also happens on a single screen but is worse with multiple screens. There are rumours of phones described as "three fold" (where three means the number of segments, with two folds between them); it will be interesting to see how that goes.

Will phones go the same way as PCs in terms of having a separation between the compute bit and the input device? It's quite possible to have a compute device in the phone form factor inside a secure pocket, which talks via Bluetooth to another device with a display and speakers. Then you could easily change your phone between a phone-size display and a tablet-size display, and when using your phone a thief would not be able to easily steal the compute bit (which has passwords etc). Could the watch part of the phone (strapped to your wrist and difficult to steal) be the active part, with a tablet-size device as an external display? There are already announcements of smart watches with up to 1GB of RAM (the same as the Samsung Galaxy S3); that's enough for a lot of phone functionality.

The Rabbit R1 [8] and the Humane AI Pin [9] have some interesting possibilities for AI speech interfaces. Could that take over some of the current phone use? It seems that visually impaired people have been doing badly in the trend towards touch screen phones, so a phone with a voice interface would be a good option for them. As an aside, I hope some people are working on AI stuff for FOSS devices.

Laptop Like

One interesting PC variant I just discovered is the Higole 2 Pro portable battery operated Windows PC with 5.5-inch touch screen [10]. It looks too thick to fit in the same pockets as current phones but is still very portable. The version with a built-in battery is $AU423, which is in the usual price range for low end laptops and tablets. I don't think this is the future of computing, but it is something that is usable today while we wait for foldable devices to take over.

The recent release of the Apple Vision Pro [11] has driven interest in 3D and head mounted computers. I think this could be a useful peripheral for a laptop or phone, but it won't be part of a primary computing environment. In 2011 I wrote about the possibility of using augmented reality technology for providing a desktop computing environment [12]. I wonder how a Vision Pro would work for that on a train or passenger jet.

Another interesting thing that's on offer is a laptop with a 7-inch touch screen beside the keyboard [13]. It seems that someone just looked at what parts are available cheaply in China (due to being parts of more popular devices) and what could fit together. I think a keyboard should be centred with the monitor for serious typing, but there may be useful corner cases where typing isn't that common and a touch-screen display is of use. Developing a range of strange hardware and then seeing which ones get adopted is a good thing, and an advantage of Ali Express and Temu.

Useful Hardware for Developing These Things

I recently bought a second hand Thinkpad X1 Yoga Gen3 for $359 which has stylus support [14], and it's generally a great little laptop in every other way. There's a common failure case of that model where touch support for fingers breaks but the stylus still works, which makes such units cheap while still allowing them to be used for testing touch screen functionality.

The PineTime is a nice smart watch from Pine64 which is designed to be open [15]. I am quite happy with it but haven't done much with it yet (apart from wearing it every day and getting alerts etc from Android). At $50 when delivered to Australia it's significantly more expensive than most smart watches with similar features, but still a lot cheaper than the high end ones. The Raspberry Pi Watch [16] is also interesting.

The PinePhonePro is an OK phone made to open standards, but its hardware isn't as good as Android phones released in the same year [17]. I've got some useful stuff done on mine, but the battery life is a major issue and the screen resolution is low. The Librem 5 phone from Purism has a better hardware design for security with switches to disable functionality [18], but it's even slower than the PinePhonePro. These are good devices for test and development but not ones that many people would be excited to use every day.

Wwan hardware (for accessing the phone network) in M.2 form factor can be obtained for free if you have access to old/broken laptops. Such devices start at about $35 if you want to buy one. USB GPS devices also start at about $35, so one is probably not worth getting if you can get a wwan device that does GPS as well.

What We Must Do

Debian appears to have some voice input software in the pocketsphinx package but no documentation on how it's to be used. This would be a good thing to document; I spent 15 minutes looking at it and couldn't get it going.

To take advantage of the hardware features in phones we need software support, and we ideally don't want free software to lag too far behind proprietary software, which IMHO means the typical Android setup for phones/tablets.

Support for changing screen resolution is already there, as is support for touch screens. Support for adapting the GUI to a changed screen size is something that still needs to be done: even with today's hardware, connecting a small laptop to an external monitor doesn't give ideal functionality for changing the UI. There also seem to be some limitations in touch screen support with multiple screens. I haven't investigated this properly yet; it definitely doesn't work in the expected manner in Ubuntu 22.04, and I haven't yet tested the combinations on Debian/Unstable.
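One way to adapt a GUI when the screen changes is to classify the available width in density-independent units and switch layouts only when that class changes. This covers a laptop plugged into a monitor or a phone being unfolded. The sketch borrows the 600 dp and 840 dp breakpoints from Android's window size classes. The class and function names are hypothetical, and the approach is not taken from KDE, GNOME or any Wayland compositor.

```python
from enum import Enum
from typing import Optional


class WidthClass(Enum):
    COMPACT = "compact"    # phone-sized: single pane, large touch targets
    MEDIUM = "medium"      # small tablet or unfolded phone: two panes may fit
    EXPANDED = "expanded"  # laptop or external monitor: full desktop layout


# Breakpoints in dp, borrowed from Android's window size class guidance.
MEDIUM_MIN_DP = 600
EXPANDED_MIN_DP = 840


def px_to_dp(pixels: int, dpi: float) -> float:
    """Density-independent pixels: 1 dp equals 1 physical pixel at 160 dpi."""
    return pixels * 160.0 / dpi


def classify_width(width_px: int, dpi: float) -> WidthClass:
    """Map a physical width to one of the three layout classes."""
    width_dp = px_to_dp(width_px, dpi)
    if width_dp >= EXPANDED_MIN_DP:
        return WidthClass.EXPANDED
    if width_dp >= MEDIUM_MIN_DP:
        return WidthClass.MEDIUM
    return WidthClass.COMPACT


class LayoutManager:
    """Hypothetical helper that requests a relayout only when the class changes."""

    def __init__(self) -> None:
        self.current: Optional[WidthClass] = None

    def on_output_changed(self, width_px: int, dpi: float) -> bool:
        """Return True if the caller should rebuild its layout."""
        new_class = classify_width(width_px, dpi)
        if new_class == self.current:
            return False
        self.current = new_class
        return True
```

For example, 1080 px at 420 dpi is about 411 dp, so it is treated as compact. 1920 px at 160 dpi is 1920 dp and is treated as expanded. Keying relayouts to the class rather than the raw pixel count avoids rebuilding the UI on every small resize.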

ML is becoming a big thing, and it has some interesting use cases for small devices where a smart device can compensate for limited input options. There's a lot of work that needs to be done in this area, and we are limited by the fact that we can't just rip off the work of other people for use as training data in the way that corporations do.

Security is more important for devices that are at high risk of theft. The vast majority of free software installations are way behind Android in terms of security, and we need to address that. I have some ideas for improvement, but there is always a conflict between security and usability. While Android is usable for its own special apps, it's not usable in an "I want to run applications that use any files from any other applications in any way I want" sense. My post about Sandboxing Phone apps is relevant for people who are interested in this [19]. We also need to extend security models to cope with things like "OK Google" type functionality, which has the potential to be a bug, and with the emerging class of LLM based attacks.

I will write more posts about these things.

Please write comments mentioning FOSS hardware and software projects that address these issues and also documentation for such things.

Happy to share that ciw is now on CRAN! I had tooted a little bit about it, e.g., here.

What it provides is a single (efficient) function

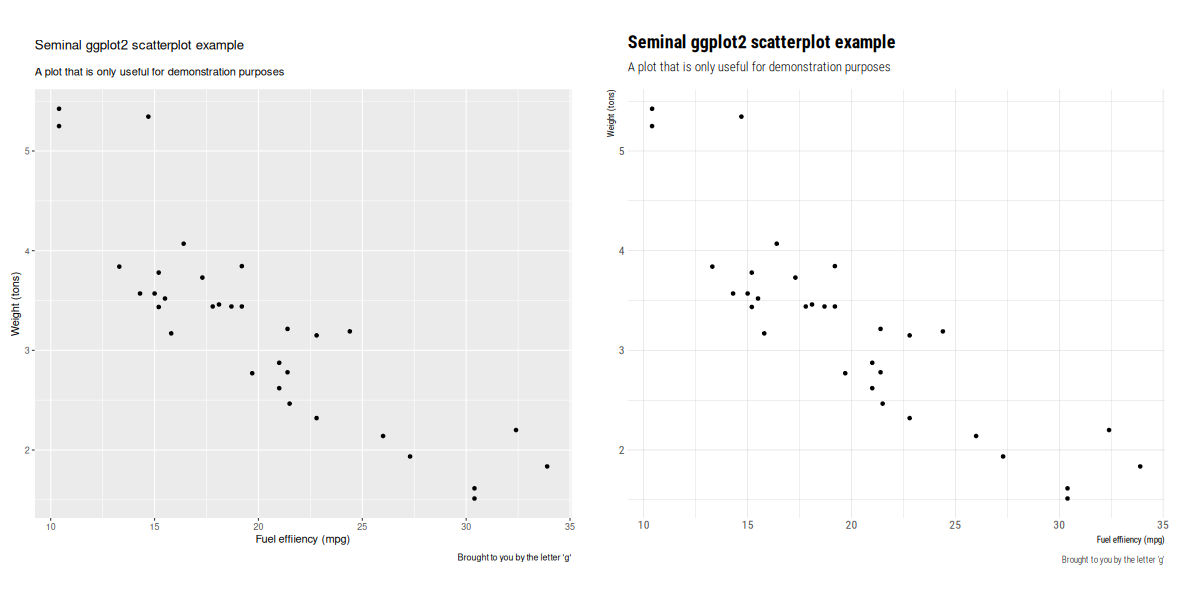

The image here comes from an example of building

My brain is currently suffering from an overload caused by grading student assignments.

In search of a somewhat productive way to procrastinate, I thought I would share a small script I wrote sometime in 2023 to facilitate my grading work.

I use Moodle for all the classes I teach, and students use it to hand their papers in to me. When I'm ready to grade them, I download the ZIP archive Moodle provides containing all their PDF files and comment them

My brain is currently suffering from an overload caused by grading student

assignments.

In search of a somewhat productive way to procrastinate, I thought I

would share a small script I wrote sometime in 2023 to facilitate my grading

work.

I use Moodle for all the classes I teach and students use it to hand me out

their papers. When I'm ready to grade them, I download the ZIP archive Moodle

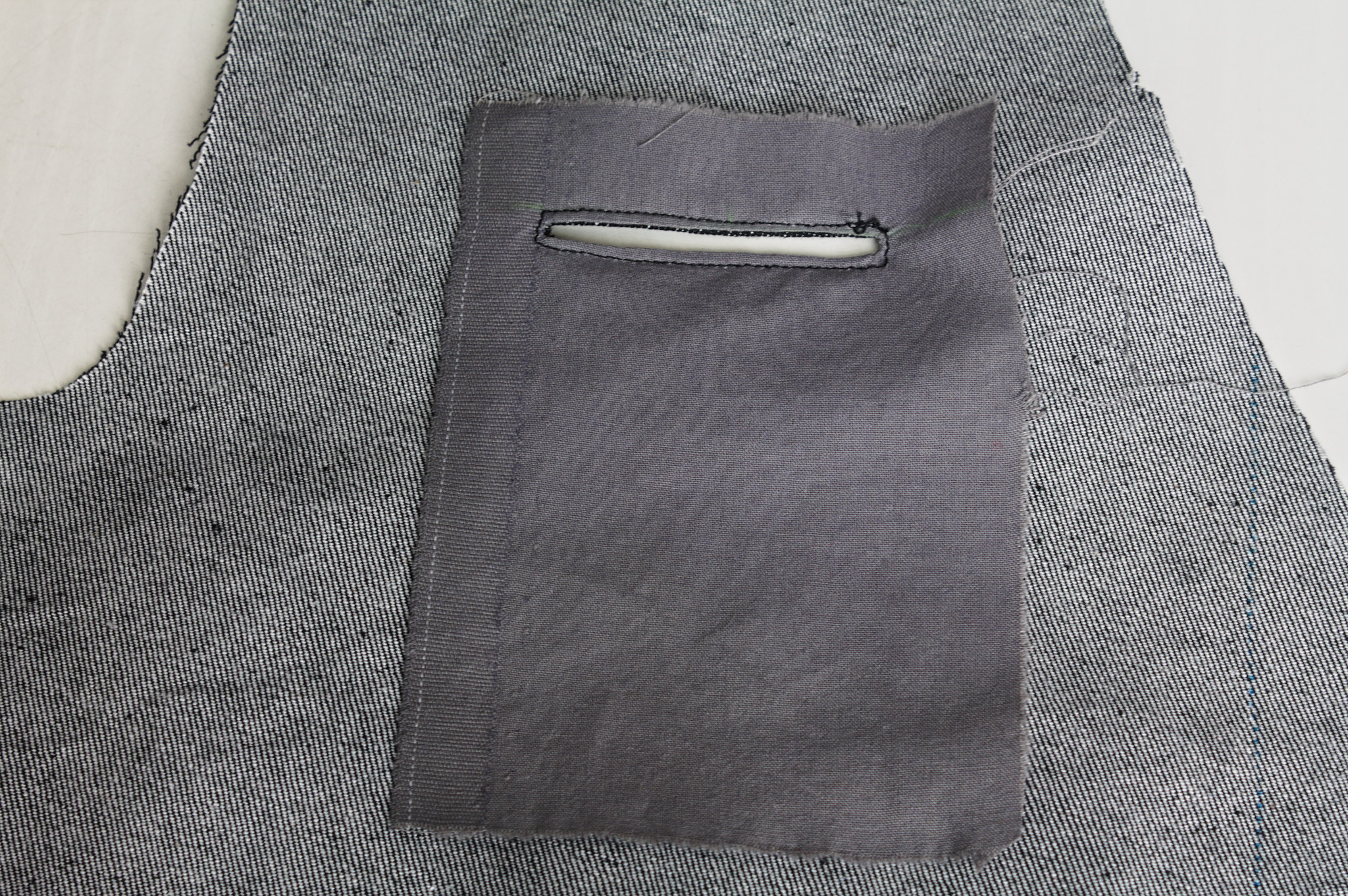

provides containing all their PDF files and comment them  I had finished sewing my jeans, I had a scant 50 cm of elastic denim

left.

Unrelated to that, I had just finished drafting a vest with Valentina, after

The other thing that wasn't exactly as expected is the back: the pattern splits the bottom part of the back to give it sufficient "spring over the hips". The book was probably published in 1892, but I had already found when drafting the foundation skirt that its idea of hips includes a bit of structure. The "enough steel to carry a book or a cup of tea" kind of structure. I should have expected a lot of spring, and indeed that's what I got.

To fit the bottom part of the back on the limited amount of fabric, I had to piece it, and I suspect that the flat felled seam in the center is helping it stick out; I don't think it's exactly bad, but it is a peculiar look.

Also, I had to cut the back on the fold, rather than having a seam in the middle and the grain on a different angle.

Anyway, my next waistcoat project is going to have a linen-cotton lining and silk fashion fabric, and I'd say that the pattern is good enough that I can do a few small fixes and cut it directly in the lining, using it as a second mockup.

As for the wrinkles, there are quite a few, but it looks like something that will be solved by a bit of lightweight boning in the side seams and in the front; that will be seen in the second mockup and the finished waistcoat.

As for this one, it's definitely going to get some wear as is, in casual contexts. Except. Well, it's a denim waistcoat, right? With a very different cut from the "get a denim jacket and rip out the sleeves", but still a denim waistcoat, right? The kind that you cover in patches, right?

The key idea of

Tightening the feedback loop

One thing we notice every so often is that although Phosh's source code is publicly available and upcoming changes are open for review, the feedback loop between changes being made to the development branch and users noticing the change can still be quite long.

This can be problematic as we ideally want to catch a regression or broken use case triggered by a change on the development branch (aka main) before the general availability of a new version.

I was working on what looked like a good pattern for a pair of jeans-shaped trousers, and I knew I wasn't happy with 200-ish g/m² cotton-linen for general use outside of deep summer, but I didn't have a source for proper denim either (I had been low-key looking for it for a long time).

Then one day I looked at an article I had saved about fabric shops that sell technical fabric, and while window-shopping on one I found that they had a decent selection of denim in a decent weight.

I decided it was a sign, and decided to buy the two heaviest denims they had: a

The shop sent everything very quickly, the courier took their time (oh, well) but eventually delivered my fabric on a sunny enough day that I could wash it and start as soon as possible on the first pair.

The pattern I did in linen was a bit too fitted, but I was afraid I had widened it a bit too much, so I did the first pair in the 100% cotton denim. Sewing them took me about a week of early mornings and late afternoons, excluding the weekend, and my worries proved false: they were mostly just fine.

The only bit that could have been a bit better is the waistband, which is a tiny bit too wide on the back: it's designed to be so for comfort, but next time I should pull the elastic a bit more, so that it stays closer to the body.

I wore those jeans daily for the rest of the week, and confirmed that they were indeed comfortable and the pattern was ok, so on the next Monday I started to cut the elastic denim.

I decided to cut and sew two pairs, assembly-line style, using the shaped waistband for one of them and the straight one for the other one.

I started working on them on a Monday, and that week I had a couple of days when I just couldn't, plus I completely skipped sewing on the weekend, but on Tuesday of the next week one pair was ready and could be worn, and the other one only needed small finishes.

And I have to say, I'm really, really happy with the ones with a shaped waistband in elastic denim, as they fit even better than the ones with a straight waistband gathered with elastic. Cutting it requires more fabric, but I think it's definitely worth it.

But it will be a problem for a later time: right now three pairs of jeans are a good number to keep in rotation, and I hope I won't have to sew jeans for myself for quite some time.

I think that the leftovers of plain denim will be used for a skirt or something else, and as for the leftovers of elastic denim, well, there aren't a lot left, but what else I did with them is the topic for another post.

Thanks to the fact that they are all slightly different, I've started to keep track of the times when I wash each pair, and hopefully I will be able to see whether the elastic denim is significantly less durable than the regular one, or whether the added weight compensates for it somewhat. I'm not sure I'll manage to remember to save the data until they wear out, but if I do it will be interesting to know.

Oh, and I say I've finished working on jeans and everything, but I still haven't sewn the belt loops to the third pair. And I'm currently wearing them. It's a sewist tradition, or something. :D

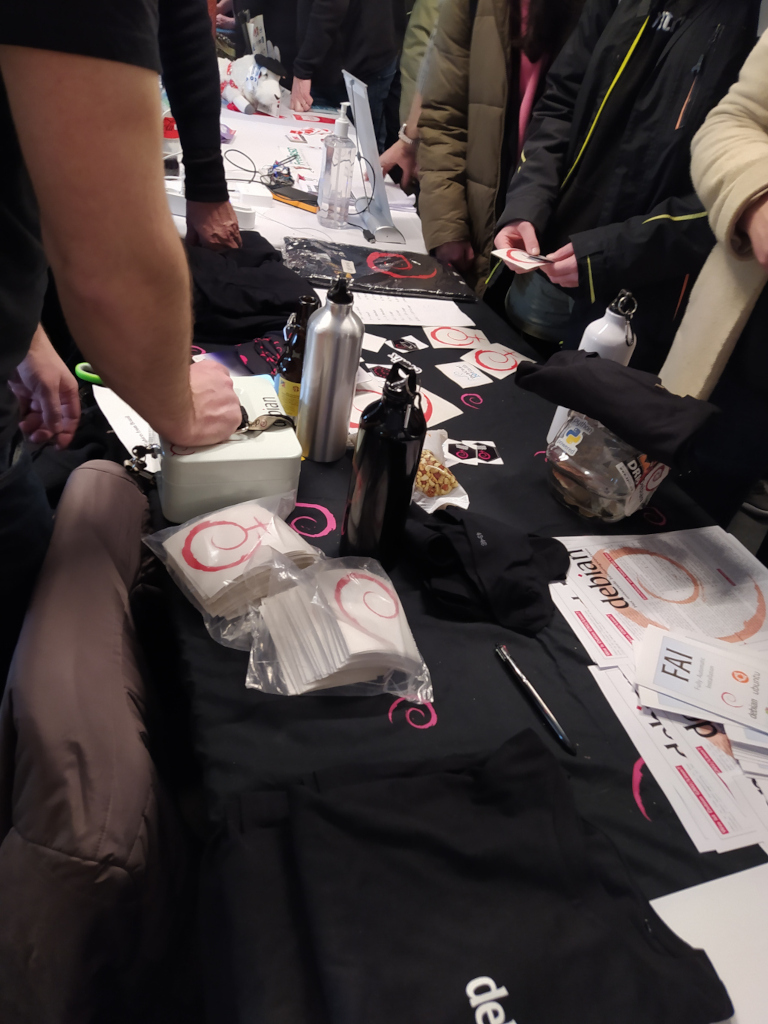

The talks held in the room were the ones below, and for each of them you can watch the video recording.

As there has been an increase in the number of proposals received, I believe that interest in the translations devroom is growing. So I intend to send the devroom proposal to FOSDEM 2025 and, if it is accepted, wait for the future Debian Leader to approve helping me with the flight tickets again. We'll see.

This version mostly just updates to the newest releases of

Two months into my